GSA SER Verified Lists Vs Scraping

Understanding the Foundation of GSA Search Engine Ranker

Anyone who works with GSA SER knows that link quality depends almost entirely on the target URL list you feed it. Without a steady stream of platforms that accept submissions, even the best-tuned campaign stalls. The debate between using GSA SER verified lists vs scraping your own targets from scratch defines how efficiently you build links and how clean your backlink profile remains.

What Are GSA SER Verified Lists?

Verified lists are pre-packaged collections of URLs where GSA SER has already successfully registered, submitted, or posted at least once. Sellers or communities run these targets through live verification engines, often across multiple server instances, then compile only the URLs that returned a confirmed “verified” status. You import them, and the software immediately has fresh, working platforms.

Core characteristics

- Pre-screened for engine compatibility (WordPress, Joomla, Drupal, article directories, forums, etc.)

- Often categorized by platform type, PageRank, or domain authority

- Include footprint-based filters that reduce dead domains and spam-flagged IPs

- Updated frequently (daily or weekly) to maintain high success rates

What Does Scraping Your Own Lists Involve?

Scraping means collecting target URLs manually or with automated tools by searching for platform footprints, competitor backlinks, or niche-specific sources. You use scraping software, custom search engine queries, and sometimes proxy rotators to gather massive raw lists, which you then run through GSA SER’s own verification routines.

Typical scraping workflow

- Craft footprint strings (e.g., "powered by wordpress" + "leave a reply") and import them into a scraper.

- Harvest URLs from Google, Bing, and niche search engines through proxied requests.

- Clean duplicates, remove obvious spam, and filter by OBL (outbound link count).

- Load the raw list into GSA SER and let the software test and verify over several days.
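
The cleaning step in the workflow above can be sketched in Python. This is a minimal illustration, not part of GSA SER or any scraper: the names `normalize` and `clean_targets`, the `(url, obl)` input format, and the `max_obl=50` cutoff are assumptions made for the example, and it presumes the outbound link counts were already measured by another tool.

```python
from urllib.parse import urlsplit

def normalize(url):
    """Return a comparison key for a URL, or None if it has no host.

    Lowercases the host, drops the fragment, and strips a trailing slash
    so that trivially different spellings count as duplicates.
    """
    parts = urlsplit(url.strip())
    if not parts.netloc:
        return None
    path = parts.path.rstrip("/")
    query = f"?{parts.query}" if parts.query else ""
    return f"{parts.scheme.lower()}://{parts.netloc.lower()}{path}{query}"

def clean_targets(rows, max_obl=50):
    """Keep the first occurrence of each URL whose OBL count is within the cutoff.

    rows: iterable of (url, obl) pairs (hypothetical input format).
    """
    seen = set()
    kept = []
    for url, obl in rows:
        key = normalize(url)
        if key is None or key in seen:
            continue  # malformed entry or duplicate
        seen.add(key)
        if obl <= max_obl:
            kept.append(url.strip())
    return kept

rows = [
    ("http://Example.com/blog/", 12),
    ("http://example.com/blog", 12),       # duplicate of the first entry
    ("http://example.org/post?id=3", 80),  # too many outbound links
    ("not a url", 1),                      # no host, skipped
]
cleaned = clean_targets(rows)
```

With the sample rows, only the first entry survives. Whether 50 is a sensible OBL ceiling depends on the campaign; treat it as a placeholder.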

Head-to-Head: GSA SER Verified Lists vs Scraping

The choice between GSA SER verified lists vs scraping is not about which method is “better” in a vacuum; it is about matching your goals, time, and resources to the right approach. Below is a detailed comparison across the factors that matter most.

Speed of deployment

- Verified lists: Instant. You download, import, and start seeing submissions within minutes because the targets are already confirmed working.

- Scraping: Slow. Harvesting 100,000 URLs might take hours, but verifying them natively inside GSA SER can add days of runtime before a usable list emerges.

Verification success rate

- Verified lists: Typically 60–90% success on first use, depending on list freshness. Since they’ve been pre-verified, the waste is minimal.

- Scraping: Raw scrapes often deliver a 5–20% initial success rate. The rest are admin panels, closed registrations, or honeypots that burn threads and proxies.
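
To make the gap concrete, the short Python sketch below applies the success-rate ranges quoted above to a list of 100,000 URLs. The function name `expected_verified` is made up for this example, and using the same list size for both methods is purely illustrative.

```python
def expected_verified(total_urls, low_rate, high_rate):
    """Return the (low, high) count of URLs expected to verify."""
    return round(total_urls * low_rate), round(total_urls * high_rate)

# Success-rate ranges taken from the comparison above.
scraped = expected_verified(100_000, 0.05, 0.20)
verified = expected_verified(100_000, 0.60, 0.90)

print("raw scrape:   ", scraped)   # (5000, 20000)
print("verified list:", verified)  # (60000, 90000)
```

In other words, at these rates a raw scrape has to be several times larger than a verified list to produce the same number of working targets.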

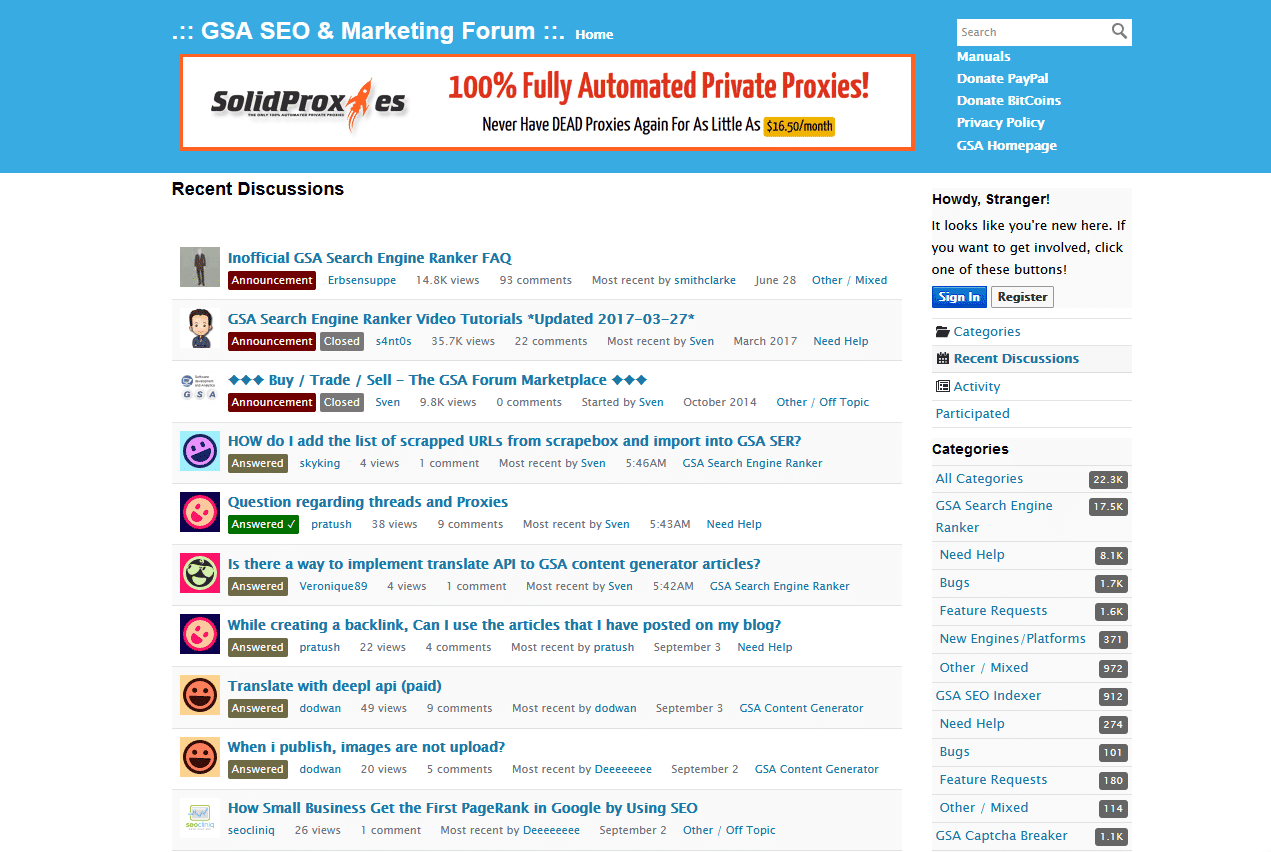


Uniqueness and footprint

- Verified lists: Used by hundreds of other buyers. Your backlink profile can look identical to many others, increasing the risk of deindexing.

- Scraping: Highly unique if you craft custom footprints and target specific niches. Ideal for tier 1 properties and clients who demand clean, diverse link profiles.

Cost efficiency

- Verified lists: Paid subscriptions can range from $30 to $150 per month, but they save enormous time and proxy/VPS costs tied to verification.

- Scraping: Tools like ScrapeBox cost a one-time fee, but you’ll spend heavily on proxies, captcha solvers, and dedicated VPS hours while GSA SER verifies raw lists.

Control and customization

- Verified lists: You get what you get. While you can filter by engine inside GSA SER, the base targets are fixed.

- Scraping: Full control. You can restrict languages, TLDs, IP ranges, and thematic relevance before a single URL touches your campaign.
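
As one example of that control, a TLD restriction can be applied to a scraped list before import. The Python sketch below is an assumption-laden illustration: `filter_by_tld` is a hypothetical helper, not a GSA SER feature, and it matches only on the hostname suffix.

```python
from urllib.parse import urlsplit

def filter_by_tld(urls, allowed_tlds):
    """Keep URLs whose hostname ends with one of the allowed TLDs.

    allowed_tlds: iterable such as {"de", "co.uk"} (leading dots optional).
    """
    suffixes = tuple("." + t.lower().lstrip(".") for t in allowed_tlds)
    kept = []
    for url in urls:
        host = (urlsplit(url).hostname or "").lower()
        if host.endswith(suffixes):
            kept.append(url)
    return kept

targets = [
    "http://shop.example.de/a",
    "https://example.co.uk/",
    "http://example.com/",
]
regional = filter_by_tld(targets, {"de", "co.uk"})
```

Here `regional` keeps the `.de` and `.co.uk` entries and drops the `.com` one. Language or IP-range filters would need extra data that a bare URL does not carry.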

When to Rely on Verified Lists

Use curated, pre-verified targets when speed and volume are critical. This is especially true for lower-tier links (tier 3 and beyond) where uniqueness matters less than rapid indexing power. Big link blasts, expired domain repurposing, and mass comment campaigns all benefit from a steady drip of verified platforms that won’t choke your proxy queues.

When a Custom Scraping Strategy Wins

Manual scraping or footprint-based harvesting makes sense for high-value tiers. If you’re building links directly to a money site or a tightly managed tier 1 buffer, you need platforms that competitors haven’t already pounded into oblivion. Custom lists let you target low-OBL domains, specific CMS versions, and regional TLDs, creating a far more natural backlink footprint that survives algorithm changes.

Hybrid Approach: The Sweet Spot

Many experienced GSA SER users don’t treat GSA SER verified lists vs scraping as an either/or choice. They mix both:

- Use daily verified lists to supply bulk, fast-verifying engines for tier 2 and 3 volume.

- Scrape niche footprints once a week, building a private reservoir of unique targets for tier 1 projects and client work.

- Re-verify and clean scraped lists before every campaign, keeping the database alive and reducing dead-target accumulation.

FAQs About Verified Lists and Scraping

Can I scrape and then sell my own verified list?

Yes, many list vendors start exactly this way. You need a reliable proxy infrastructure, multiple GSA SER instances for parallel verification, and a routine schedule. Quality control is critical: one broken footprint can ruin the whole batch.

Do verified lists work with all GSA SER engines?

Most reputable sellers tag their lists per engine family. However, no list covers every possible engine. Always check the list notes to ensure it includes the platforms you’ve activated in your project options.

Is scraping safer from a penalization standpoint?

Scraping itself is neutral, but the resulting backlink profile is often safer because you can avoid overused domains. Privately scraped lists typically leave far fewer “unnatural link” footprints across shared IP ranges than verified lists that hundreds of other buyers are using.

How often should I replace my lists?

For verified lists, daily updates are ideal if volume is your priority. A week-old list may already be 30% dead. Scraped lists can last months if you clean them periodically, but running a fresh harvest every few weeks captures newly created sites that are easier to register on.
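
For a rough sense of how quickly a list degrades, the Python sketch below extrapolates the "30% dead after a week" figure. The source gives only that one data point; assuming the same loss compounds every week is a simplification made for this example, and `surviving_fraction` is a hypothetical helper name.

```python
def surviving_fraction(weeks, weekly_death_rate=0.30):
    """Fraction of targets still alive after `weeks`, assuming a constant weekly loss."""
    return (1 - weekly_death_rate) ** weeks

for weeks in (1, 2, 4):
    print(f"after {weeks} week(s): {surviving_fraction(weeks):.0%} alive")
```

Under that assumption, fewer than half of the targets remain after two weeks, which is why frequent updates matter most for volume-driven tiers.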

What’s the biggest mistake when combining both methods?

Failing to separate tiers. Don’t blast your scraped tier-1 list with the same settings you use for verified tier-3 targets. The uniqueness advantage evaporates if you spam low-quality engines onto your best hand-picked domains.

Real command of the GSA SER verified lists vs scraping dynamic means applying the right list to the right job. Automated tools thrive on bulk, but sustainable rankings grow from carefully selected targets that nobody else is hammering. Build a workflow that does both, and you’ll spend less time babysitting dead URLs and more time watching your graphs climb.